In the sphere of enterprise productivity, a significant transformation is underway, driven by the advent of Large Language Models (LLMs). These sophisticated models, equipped with advanced language processing skills, are reshaping the landscape of human-machine interaction. This article dives into the capabilities of LLMs and their potential to transform productivity across diverse environments. Through the lens of recent research and practical applications, we discuss the impact of LLMs, highlighting their potential to enhance human skills, optimize workflows, and pave the way for a new epoch of efficiency and innovation.

From scripted chatbots to Generative AI

Throughout this year, we have seen Generative AI explode in popularity, capturing the attention of business leaders, who are keen to understand how AI will transform and improve the way we work.

While the recent hype around Generative AI seems like a sudden breakthrough, it is really the culmination of decades of innovation and progress. Computer scientists at MIT developed the first known chatbot, ELIZA, in 1966. Inspired by ELIZA, another chatbot, A.L.I.C.E., emerged in the 1990s, based on the work of scientist Richard Wallace. These first-generation chatbots used keyword recognition and decision trees to produce scripted answers.

Conversational agents such as IBM Watson, Apple's Siri, and Amazon's Alexa emerged in the 2010s, enabled by more advanced natural language processing capabilities, such as the ability to process voice commands. Generative AI chatbots then gained momentum in the 2020s, following significant leaps in deep learning research.

Notably, in 2018, OpenAI published a paper on the Generative Pre-Trained Transformer (GPT), a type of Large Language Model (LLM) based on research from Google. A few years later, in November 2022, OpenAI launched ChatGPT, an AI chatbot built upon GPT-3.5. Within two months, the application gained over a hundred million users and spurred competitors, such as Google and Meta, to ramp up the development of their own LLMs, PaLM and LLaMA, respectively.

What makes this wave of generative AI particularly significant?

Chiefly, the broad range of capabilities of chatbots powered by LLMs, as well as the ease of access. Through ChatGPT, for example, many workers have begun to use LLMs to boost productivity in a variety of tasks, from content generation to knowledge retrieval, all simply done by conversing. Recent research from OpenAI suggests that “about 15% of all worker tasks in the US could be completed significantly faster at the same level of quality” using LLMs. Realistically though, how will we get there?

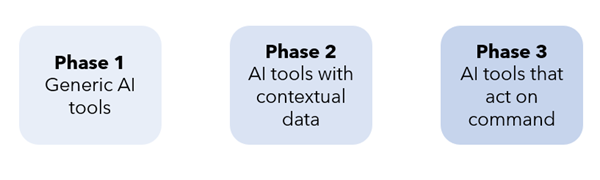

Cohere, an enterprise-focused generative AI startup, proposes a three-phase evolution of productivity centered on the development of knowledge assistants powered by LLMs.

We are in the first phase, where we have generic AI tools, but these tools lack any corporate contextual data. As soon as we build custom models with company data access, we enter the second phase, during which workers access a knowledge assistant to search and synthesize internal data. These increased capabilities will lead to significant gains in productivity through human-machine collaboration. Eventually, we will enter the third phase, at which point knowledge assistants can interact with enterprise systems on command.

Adding a layer of sophistication to LLMs

For the most significant gains in productivity, the key idea is that LLMs are not necessarily an end in themselves; instead, they can serve as building blocks for creating additional tools. Through specialized applications, such as code generation, organizations can integrate LLMs into their workflows and improve efficiency.

GitHub Copilot, an artificial intelligence tool that serves as a pair programmer, is one such example. In one study, researchers remark that “software developers using Microsoft’s GitHub Copilot completed tasks 56 percent faster than those not using the tool.”

An interesting use case highlighted in a recent McKinsey report is the use of LLMs for legacy code conversion. Copilot can explain code when prompted, allowing developers to analyze legacy frameworks through natural language translation. Translating code into natural language helps developers understand the business context of the code more easily and can potentially make the migration of legacy frameworks far more productive.

The future of productivity

The gains in productivity go beyond software engineering. LLMs have the potential to increase productivity within many other domains:

- Research & Development: LLMs can be used to generate prompt-based drafts and designs, which would allow researchers to explore a larger number of design candidates.

- Legal: One could leverage LLMs to draft legal documents that incorporate specific regulatory requirements. These requirements can be carefully integrated through prompt engineering.

- Organizational workflows: Internal knowledge management systems can be elevated by “virtual experts” powered by LLMs. Through natural language queries sent to the expert, employees can retrieve internal knowledge, speeding up the process of information retrieval and fostering more autonomy in the workplace.

- Marketing and sales: Want to generate customized messages more easily and quickly? LLMs can create messages that cater to the profiles of individual customers. Additionally, these tools can perform various tasks such as generating initial drafts for brand advertisements, creating catchy headlines and slogans, crafting social media posts, and writing product descriptions.
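As a rough illustration of the prompt engineering mentioned in the Legal item above, the sketch below assembles a drafting prompt that spells out each regulatory requirement explicitly. The function name `build_drafting_prompt`, the template wording, and the sample requirements are all hypothetical, chosen only for illustration; the resulting string would be sent to whichever LLM an organization uses.

```python
def build_drafting_prompt(document_type, requirements, audience="a general reader"):
    """Return a prompt asking an LLM for a draft that covers every requirement.

    All names and wording here are illustrative assumptions, not a fixed recipe.
    """
    if not requirements:
        raise ValueError("at least one requirement is needed")
    # Number the requirements so the model (and a reviewer) can check them one by one.
    numbered = "\n".join(
        f"{i}. {req}" for i, req in enumerate(requirements, start=1)
    )
    return (
        f"Draft a {document_type} for {audience}.\n"
        "The draft must satisfy each of the following requirements:\n"
        f"{numbered}\n"
        "After the draft, list which paragraph addresses each requirement."
    )


# Hypothetical sample input
prompt = build_drafting_prompt(
    "data processing agreement",
    ["State the purpose of the processing.", "Name the retention period."],
)
print(prompt)
```

Numbering the requirements and asking the model to map each one to a paragraph is one possible design choice; it makes omissions easier for a human reviewer to spot.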

In a recent report by the Pew Research Center on how our digital lives may shift by 2035, computational social scientist Jeremy Foote observes that LLMs are important tools for “empowering creativity through novel ways of thinking” and “improving productivity in knowledge work through making some tedious aspects easier, such as reading and summarizing large amounts of text.” By streamlining labor-intensive tasks, employees can not only become more efficient but also shift their attention to value-added and strategic activities, a more implicit form of added productivity.

Jim Kennedy, who serves as SVP Strategy and Enterprise Development at the Associated Press, comments on matters of creativity and productivity, while also providing a strategic perspective: he remarks that generative AI will “spark a massive new wave of creativity along with major productivity boosts” but notes that we need to “move quickly from experimentation to implementation” in order to realize the biggest possible gains.

Transformation task

At Effixis, we drive this exact transformation from experimentation to implementation, both internally and with our clients. Our developers and data scientists have weighed in on their own productivity gains within various tasks, some of which we highlight below:

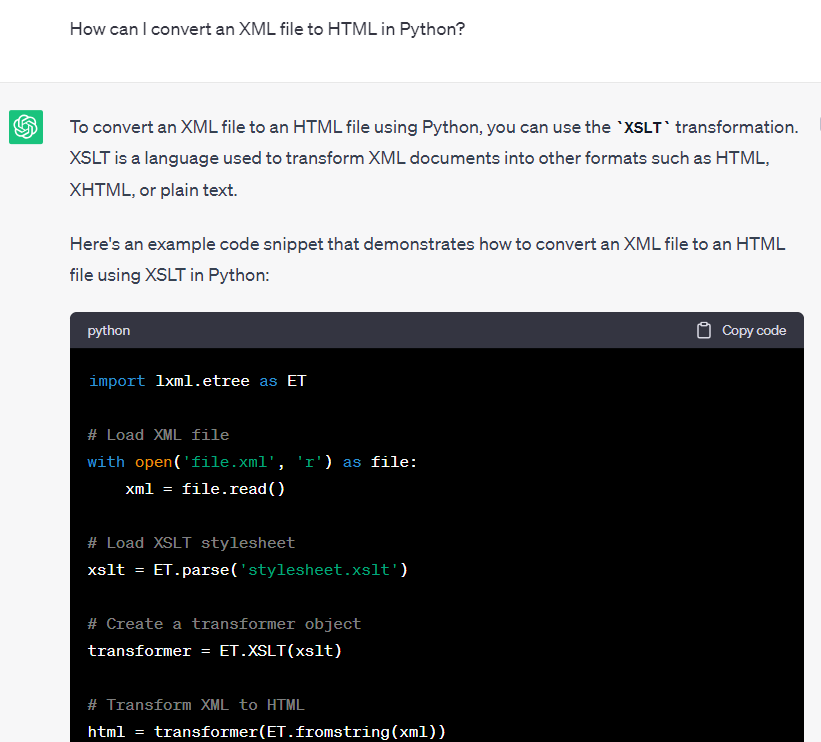

- Streamlining development tasks: debugging assistance, explaining snippets of code, generating code in various settings (terminal commands for file manipulation and package management, Python code for data manipulation with pandas and plotting with Plotly), and synthetic data generation.

- Information retrieval: getting assistance in navigating applications, generating Azure Cosmos DB queries to display articles in the Azure portal, and expanding our knowledge of niche topics in natural language processing.

- Business-oriented tasks: brainstorming a list of specific features that a client might be interested in, and generating detailed reports based on technical findings.

In these examples, what makes LLMs so powerful as an enabler for productivity?

The conversational format, for starters. One of our data scientists notes, “in contrast to a search engine, I can ask follow-up questions, and I can ask about multiple topics that only slightly overlap.”

With our clients, we have supported their journey to adopt LLMs in their environments by leveraging existing technologies such as Azure. Azure's recent introduction of services offering OpenAI and Meta models allows organizations to use powerful language models without compromising on compatibility and security. These types of integrations have allowed us to develop AI solutions for clients in various sectors, such as healthcare, banking, manufacturing, and defense, in a manner that respects data governance. In these settings, LLM-powered agents, with internal chatbots being a key example, can boost productivity by allowing employees to explore diverse knowledge bases across multiple departments.
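To make the idea of an internal chatbot drawing on several departmental knowledge bases more concrete, here is a minimal sketch of the "retrieve, then prompt" pattern. It is not a description of any production system: the sample documents, the function names, and the simple word-overlap scoring are all assumptions made for illustration. Real deployments would more likely use a dedicated search or embedding service, and the final prompt would be sent to a hosted LLM rather than printed.

```python
import re

# Hypothetical knowledge base: (department, text) pairs standing in for internal documents.
DOCUMENTS = [
    ("HR", "Employees may carry over up to five unused vacation days per year."),
    ("IT", "Password resets are handled through the internal self-service portal."),
    ("Finance", "Expense reports must be submitted within thirty days of purchase."),
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by how many words they share with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(text)), dept, text) for dept, text in documents]
    # Keep only documents with at least one shared word, best matches first.
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(dept, text) for _, dept, text in scored[:top_k]]


def build_prompt(question, passages):
    """Combine the retrieved passages and the question into one prompt string."""
    context = "\n".join(f"[{dept}] {text}" for dept, text in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


question = "How do I submit expense reports?"
passages = retrieve(question, DOCUMENTS)
print(build_prompt(question, passages))
```

Tagging each passage with its department keeps the source of an answer visible, which can matter when the same question touches several teams.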

Overall, many research findings and real-world applications highlight the substantial productivity gains achievable through LLM utilization. By swiftly transitioning from experimentation to implementation, organizations can leverage the conversational nature of LLMs to unlock these gains. Effixis, as a proponent of this transformative shift, actively drives the implementation of LLMs internally and with its clients. Let us join you on the journey towards a more productive future.

Sources