What if dashboards, as we know them, will soon be a thing of the past? The advent of Large Language Models such as GPT-3 (the model behind ChatGPT) enables you to interact with your data simply through a prompt, removing the need for internal or client-facing dashboards and reporting. Our CEO Rémi Sabonnadiere and Head of AI Zachary Schillaci, PhD, have written an article with a practical walkthrough and a concrete example of how prompts will replace dashboards. If you want to assess the potential of GPT-3 and other LLMs for your company, we would be glad to assist you!

Image: Courtesy of DALL-E

Dashboards have been a staple of data visualization and analysis for years. They allow organizations to access important data, make decisions based on that data, and track progress over time. However, with the advent of Large Language Models (LLMs), it’s possible that dashboards, as we know them, will soon become a thing of the past.

Imagine if you could build a ChatGPT-like platform to interact and conversationally inspect your company data – asking for a KPI, a plot, or qualitative analysis without any programming! Is that too good to be true? Well, we tested it out.

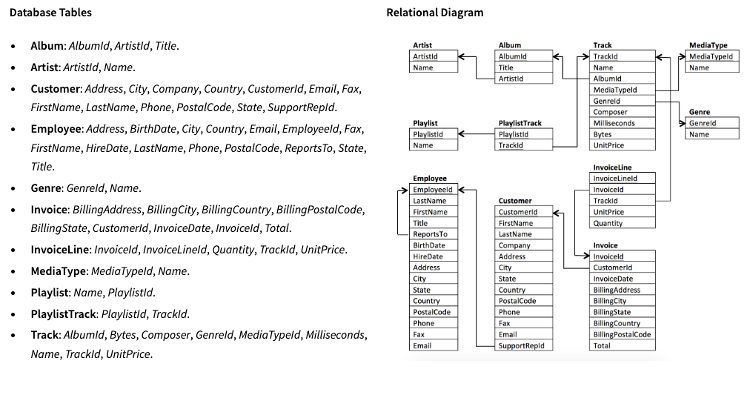

We built an application using OpenAI’s well-known LLM GPT-3 (the “brains” behind ChatGPT) to translate natural language questions into executable SQL queries. For demonstration purposes, we took a sample database describing a digital media store (remember iTunes?). The database includes a total of 11 tables, which are related as shown in the diagram below.

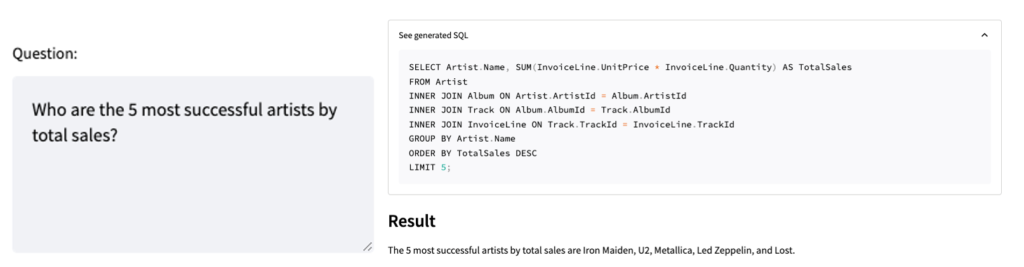

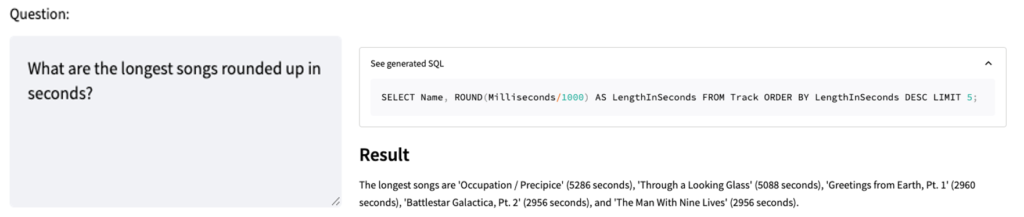

Through clever prompt engineering and additional Natural Language Processing, we describe the database schema to GPT and require a response in the form of an SQL query. We then built a simple chat interface that lets the user ask GPT questions about the data directly. Once a question has been translated into SQL, we run the query against the database and ask GPT to phrase the result back to us in natural language. Look at some of the examples below!

Note the power of this approach; even though we aren’t asking for tables or columns explicitly by name, GPT understands how to correctly write its response given the reference schema.
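The flow described above (describe the schema, request SQL, run the query) can be sketched roughly as follows. This is a minimal illustration and not the application we built: the two-table schema is a simplified stand-in for the media store database, the prompt wording is an assumption, and `ask_llm` is a hypothetical placeholder that returns a canned query where a real LLM call would go.

```python
import sqlite3


def describe_schema(conn):
    """Return the CREATE TABLE statements, which serve as the schema description."""
    rows = conn.execute(
        "SELECT sql FROM sqlite_master WHERE type = 'table' AND sql IS NOT NULL"
    ).fetchall()
    return "\n".join(row[0] for row in rows)


def build_prompt(schema, question):
    """Combine schema and question into a prompt (the wording is an assumption)."""
    return (
        f"Given the following SQLite schema:\n{schema}\n\n"
        f"Write a single SQL query that answers: {question}\nSQL:"
    )


def ask_llm(prompt):
    """Hypothetical placeholder for the LLM call; returns a canned query."""
    return "SELECT COUNT(*) FROM Track;"


def answer(conn, question, llm=ask_llm):
    """Translate the question to SQL via the LLM, then run it on the database."""
    sql = llm(build_prompt(describe_schema(conn), question))
    return sql, conn.execute(sql).fetchall()


# Simplified stand-in database with made-up rows.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Artist (ArtistId INTEGER PRIMARY KEY, Name TEXT);
CREATE TABLE Track (TrackId INTEGER PRIMARY KEY, Name TEXT, UnitPrice REAL);
INSERT INTO Track (Name, UnitPrice) VALUES ('Song A', 0.99), ('Song B', 1.29);
""")

sql, rows = answer(conn, "How many tracks are in the store?")
print(sql, rows)
```

Only the schema text reaches the prompt in this sketch; the rows stay in the database until the generated query is executed locally.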

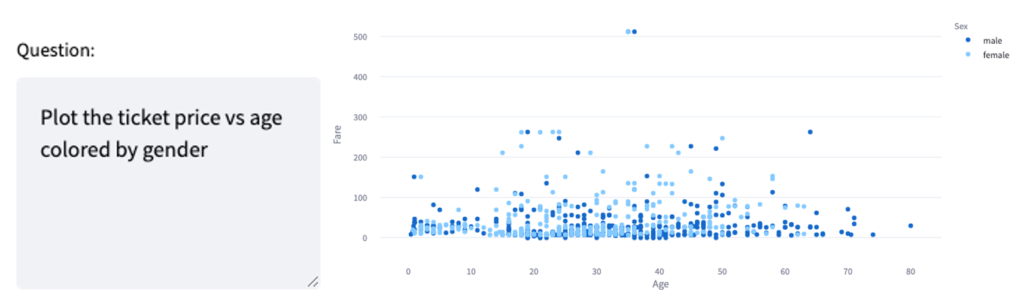

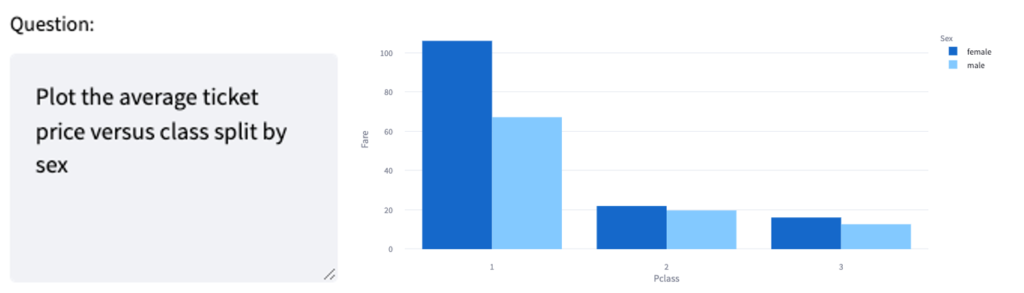

Going a step further, we focus on a single dataset to highlight data analysis and modelling capabilities. We chose the famous Titanic passenger dataset, which contains a detailed account of the passengers onboard the Titanic, including their name, gender, age, and fare. In this demonstration, we can directly ask for visualizations of the data. Look at some examples below!

The above example is quite impressive as we asked for the “ticket price,” which is named “Fare” in the database, but the system has understood the column’s meaning and can map it to synonyms or relevant descriptions in a robust way.

Once again, GPT can correctly map the variables mentioned in our question to the corresponding ones in the dataset. It can also handle data transformations and aggregations along the way (e.g., average or sum).

Another advantage of this approach is empowering data analysis on top of data retrieval. Indeed, it is now possible for individuals with no programming knowledge to perform exploratory data analysis and statistical modelling. For example, instead of writing code to perform a regression analysis, a user can ask the language model: “Can you perform a linear regression on my data and show me the results?” The language model then generates the appropriate code and formats the output for the user, giving them more time to study the results and extract insights. For people with some programming skills, such an approach would remain interpretable and useful as they could access and inspect the code and further modify it if need be.

I am not a digital media store, nor interested in Titanic data. Can it help my company?

Any company that uses dashboards or reporting to make more informed decisions can benefit from this approach, for several reasons:

- Immediate response: Such prompts can be used on the fly, in board meetings or elsewhere, to answer advanced questions without having to prepare dashboards or reports in advance, which could take weeks of effort. This increases the company’s agility.

- Reduced user training and data upskilling: While upskilling on the fundamentals of AI will become even more critical, upskilling on the basics of data manipulation (basic Python, R, SQL, or PowerBI) will become unnecessary for most users. As ChatGPT showed, a conversational interface is intuitive and quick to adopt.

- Increased visibility: While a dashboard must be designed based on future users’ requirements, needs, and personas, a prompt will adapt to the user and the context, drastically reducing the number of different sources of information for the company.

The above advantages already cover a wide spectrum of enterprise applications. Companies and public organizations can also use this approach to create intelligent agents that improve their customer experience, for example by taking chatbots and robo-advisors to the next level or by powering digital twins to test marketing hypotheses.

OK, but what about privacy?

One question you may have is regarding data privacy – should I be sharing my data with LLMs, such as those provided by OpenAI? This is an important question to consider if you work in a data-sensitive field. There are two ways to address this problem.

- Isolation: Separate your database contents from the LLM itself and only share your database metadata (e.g., table and column names). This is what we have done for our proof-of-concept demonstration, except for the final step, where the LLM formulates the output in natural language. If data privacy concerns you, the final step can just be skipped, and the query results returned directly.

- Self-hosted LLM: Use an open-source LLM (e.g. Google’s FLAN-T5) hosted on your secure premises. This solution allows you to use the LLM to its full potential without worrying about data privacy issues, as the model will be hosted entirely on-site.
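The isolation option can be sketched as follows: only table and column names are collected for the prompt, and no rows are read. This is a minimal illustration assuming a SQLite database; the `Customer` table, its single row, and the `schema_metadata` helper are hypothetical and exist only for the example.

```python
import sqlite3


def schema_metadata(conn):
    """Map each table name to its column names; no row data is read."""
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        )
    ]
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk).
    return {
        table: [col[1] for col in conn.execute(f'PRAGMA table_info("{table}")')]
        for table in tables
    }


# Hypothetical table holding one sensitive, made-up row.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Customer (CustomerId INTEGER PRIMARY KEY, Email TEXT);
INSERT INTO Customer (Email) VALUES ('jane@example.com');
""")

metadata = schema_metadata(conn)

# Only this text would be shared with the LLM.
prompt_context = "\n".join(
    f"{table}({', '.join(columns)})" for table, columns in metadata.items()
)
print(prompt_context)
```

If the final natural-language step is skipped, the query results are returned to the user directly and never pass through the LLM.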

We can develop either solution – or any alternative – to suit your specific needs!

LLM-powered technology has the potential to revolutionize the way organizations interact with and make decisions based on their data. It will help organizations quickly access the insights they need to make informed decisions by eliminating the need for dashboards. While there may still be a place for dashboards in certain circumstances, the future of data analysis and decision-making will certainly be shaped by LLM technology.

Interested in a demo? Reach out to us!